In the world of search engine optimization (SEO) and content creation, one term that often buzzes around is Latent Semantic Indexing (LSI).

But what does it mean? And why should you pay attention to it?

Dive into this article to uncover seven crucial facts about LSI, shedding light on its origins, significance, and the transformative impact it can have on digital strategies.

Whether you’re an SEO novice or a seasoned professional, understanding the intricacies of LSI can elevate your content game and enhance your website’s visibility in the vast digital ocean.

- What Is Latent Semantic Indexing?

- Keyword Analysis vs Latent Semantic Indexing

- Latent Semantic Indexing and Topical Authority

- Latent Semantic Indexing and Vector Analysis

- Does Google Use Latent Semantic Indexing?

- How Can LSI Help You Rank Better In Google?

- Google: There Is No Such Thing As LSI Keywords

- Conclusion

- Related Resources

What Is Latent Semantic Indexing?

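Latent Semantic Indexing is a mathematical method for finding patterns in the way that words cluster together across a collection of documents, such as online content. It builds on a matrix technique called singular value decomposition. The resulting information is then indexed so that it can be used to answer queries.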

To put it another way, latent semantic indexing studies the co-occurrence of words. By doing that, it finds the hidden (latent) relationships between words which in turn allows it to understand meaning (semantics).
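To make the idea of counting co-occurrence concrete, here is a minimal Python sketch of a term-document matrix, the kind of table that LSI starts from. The three tiny documents and the function name `term_document_matrix` are made up for illustration. The sketch stops at the counting stage and does not perform the matrix factorization that real LSI applies afterwards.

```python
from collections import Counter

# Three tiny documents, invented for illustration.
DOCS = {
    "anatomy": "arms bend at the elbow",
    "trade": "germany sells arms to saudi arabia",
    "chemistry": "heat the solution to 75 degrees celsius",
}


def term_document_matrix(docs):
    """Map each term to its count in every document (one column per document)."""
    counts = {name: Counter(text.split()) for name, text in docs.items()}
    vocab = sorted(set().union(*counts.values()))
    return {term: [counts[name][term] for name in docs] for term in vocab}


matrix = term_document_matrix(DOCS)
print(matrix["arms"])      # [1, 1, 0]
print(matrix["solution"])  # [0, 0, 1]
```

Each row shows where a word appears. Rows that look alike across many documents are the raw material from which LSI infers that words are related.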

Latent semantic indexing was a major step forward for the field of text comprehension because it takes account of the fact that the meaning of words changes depending on context.

Here are some examples:

- Arms bend at the elbow.

- Germany sells arms to Saudi Arabia.

- Work out the solution in your head.

- Heat the solution to 75° Celsius.

- The key broke in the lock.

- The key problem was not one of quality but of quantity.

At the heart of latent semantic indexing is a theory called the Distributional Hypothesis. According to this theory, words that occur in the same contexts tend to have similar meanings. As one linguist put it:

“You shall know a word by the company it keeps.”

J. R. Firth, 1957
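The Distributional Hypothesis can be illustrated with a short Python sketch. It is a simplified illustration under stated assumptions, not how any search engine works: the six sentences, the stopword list, and the helper names `context_vector` and `cosine` are all invented for the example. Each word is described by the words it shares a sentence with, and two words are then compared by the cosine similarity of those counts.

```python
from collections import Counter
from math import sqrt

# Toy corpus, invented for illustration only.
SENTENCES = [
    "the doctor examined the patient in the hospital",
    "the physician examined the patient in the clinic",
    "the doctor treated the patient in the clinic",
    "the physician treated the patient in the hospital",
    "the pilot landed the plane at the airport",
    "the pilot flew the plane to the airport",
]

STOPWORDS = {"the", "in", "at", "to"}


def context_vector(target, sentences):
    """Count the words that share a sentence with `target`."""
    counts = Counter()
    for sentence in sentences:
        words = [w for w in sentence.split() if w not in STOPWORDS]
        if target in words:
            counts.update(w for w in words if w != target)
    return counts


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


doctor = context_vector("doctor", SENTENCES)
physician = context_vector("physician", SENTENCES)
pilot = context_vector("pilot", SENTENCES)

print(round(cosine(doctor, physician), 2))  # 1.0
print(round(cosine(doctor, pilot), 2))      # 0.0
```

Note that "doctor" and "physician" never appear in the same sentence here. They score as similar only because they keep the same company, which is exactly the kind of hidden relationship the hypothesis describes.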

Keyword Analysis vs Latent Semantic Indexing

So how does this relate to search engines?

In the 1990s, when the first web search engines appeared, keyword density was one of the few measures of relevance available. The more often a keyword appeared in a piece of content, the more relevant that content was judged to be to the search query.

Of course, keyword density failed to understand context. And it was also easy to manipulate. Websites would rank high in the search results by stuffing their content with a given keyword.
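As a rough illustration of why keyword density was so easy to game, here is a small Python sketch. The function `keyword_density` and both sample texts are hypothetical. The sketch simply divides the number of times a keyword appears by the total word count, which is one common way of defining density.

```python
import re


def keyword_density(text, keyword):
    """Share of words in `text` that are exactly `keyword` (case-insensitive)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    return words.count(keyword.lower()) / len(words)


honest = "Our shop repairs vintage guitars and restores worn frets and necks."
stuffed = "Guitars guitars guitars: cheap guitars, best guitars, buy guitars."

print(f"{keyword_density(honest, 'guitars'):.2f}")   # 0.09
print(f"{keyword_density(stuffed, 'guitars'):.2f}")  # 0.67
```

The stuffed text scores far higher even though it says nothing useful, which is the weakness described above.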

But when latent semantic indexing appeared on the scene, keyword stuffing was no longer effective.

Why?

Because with latent semantic indexing, search engines are not looking for a single keyword – they’re looking for patterns of keywords.

To put it another way: search engines are moving away from keyword analysis towards topical authority. The practice of optimizing content for topical authority rather than keyword density is called semantic SEO.

Latent Semantic Indexing and Topical Authority

Topical authority is becoming a major ranking factor for search engines. On Google, for example, you can outrank websites with much higher domain authority (i.e. websites with a much stronger link profile) by creating content that has very high topical authority.

It’s important to note here that the concept of topical authority applies to both web pages and to websites as a whole.

A web page that explores every aspect of a topic in depth can be said to have high topical authority.

Similarly, a website that covers a niche comprehensively has high topical authority.

For example, if all you write about is 1930s jazz music, your website will have very high topical authority on that topic. When you publish articles on that topic, your web page will likely rank very high. Your website might outrank other sites that have many more backlinks.

But if your website covers every genre and era of jazz that has ever existed, its page on 1930s jazz probably won’t rank as high as the specialist site’s article. The reason is that the broader website lacks topical authority for ‘1930s jazz’.

Latent Semantic Indexing and Vector Analysis

We’ve talked a lot about latent semantic indexing. But it’s not the only tool that computers are using to try to understand the meaning of words.

There’s also a thing called vector analysis.

So what is vector analysis when it’s applied to words?

A word vector is a row of numerical values associated with a single word. Each value in the row captures a dimension of the word’s meaning.

Here’s an example:

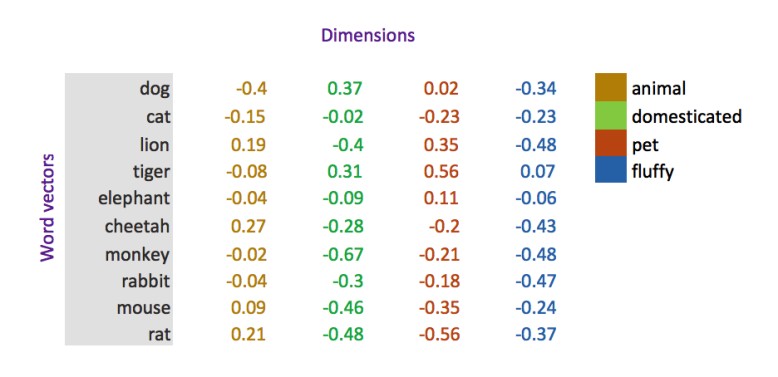


Each number in the row attempts to encapsulate the meaning of the word along one of four different dimensions (animal, domesticated, pet, fluffy).
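The sketch below shows how vectors like these can be compared in Python. The four dimensions come from the example above, but every number in `VECTORS`, and the words chosen, are invented for illustration and are not taken from the image. Similarity is measured with cosine similarity, a common choice for word vectors.

```python
from math import sqrt

# Dimensions: (animal, domesticated, pet, fluffy).
# All values are invented for illustration.
VECTORS = {
    "dog": (0.9, 0.9, 0.9, 0.6),
    "cat": (0.9, 0.8, 0.9, 0.8),
    "lion": (0.9, 0.0, 0.0, 0.5),
    "sofa": (0.0, 0.0, 0.0, 0.4),
}


def cosine(u, v):
    """Cosine similarity: 1.0 means the vectors point the same way."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = sqrt(sum(a * a for a in u))
    norm_v = sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def rank_by_similarity(word):
    """Other words, sorted from most to least similar to `word`."""
    others = [w for w in VECTORS if w != word]
    return sorted(others, key=lambda w: cosine(VECTORS[word], VECTORS[w]), reverse=True)


print(rank_by_similarity("dog"))  # ['cat', 'lion', 'sofa']
```

With these made-up values, "cat" lands closest to "dog", "lion" shares only the animal dimension, and "sofa" shares almost nothing.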

The difference between latent semantic indexing and word vectors lies in how they are built. LSI is a count-based model: it starts from counts of how often words occur in a given context. Prediction-based models such as word2vec instead learn a word’s vector by training a model to predict the word from its neighbors (or its neighbors from the word).

For example, through vector analysis, the Google algorithm “understands that Paris and France are related the same way Berlin and Germany are (capital and country), and not the same way Madrid and Italy are”.
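The capital-and-country relationship can be reproduced with simple vector arithmetic. The Python sketch below uses hand-made three-dimensional vectors, so it only illustrates the arithmetic. Real models learn vectors with hundreds of dimensions from large amounts of text, and the values in `VECTORS` and the helper `analogy` are assumptions made up for this example.

```python
from math import sqrt

# Hand-made toy vectors; real models learn their values from text.
VECTORS = {
    "france": (0.1, 1.0, 0.0),
    "paris": (0.9, 1.0, 0.1),
    "germany": (0.0, 0.0, 1.0),
    "berlin": (0.9, 0.1, 1.0),
    "italy": (0.1, -1.0, 0.0),
    "rome": (1.0, -0.9, 0.0),
    "spain": (0.0, 0.0, -1.0),
    "madrid": (0.9, 0.0, -0.9),
}


def cosine(u, v):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = sqrt(sum(a * a for a in u))
    norm_v = sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def analogy(a, b, c):
    """Answer 'a is to b as ? is to c' using the vector a - b + c."""
    query = tuple(x - y + z for x, y, z in zip(VECTORS[a], VECTORS[b], VECTORS[c]))
    candidates = [w for w in VECTORS if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(query, VECTORS[w]))


print(analogy("paris", "france", "germany"))  # berlin
print(analogy("madrid", "spain", "italy"))    # rome
```

Subtracting "france" from "paris" leaves roughly the idea of "capital of", and adding "germany" moves that idea to a new country, so the nearest remaining word is "berlin".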

Does Google Use Latent Semantic Indexing?

This is where the controversy begins.

Latent Semantic Indexing as ‘Old Technology’

Lately, a number of articles have appeared online claiming that Google doesn’t use latent semantic indexing. Some of them go further and claim that understanding how LSI works is not going to help your SEO.

Of course, no one outside Google knows exactly what the Google algorithm does.

But let’s look at the likelihood (or otherwise) that Google uses latent semantic indexing.

Some have argued that because LSI was developed in the 1980s, it’s ‘old technology’ and it’s therefore unlikely that Google uses LSI in its algorithm.

There’s a problem with this argument.

The date that LSI was discovered is irrelevant to whether it is being used by Google today.

Indeed, the date that any technology was discovered has no bearing on whether we still use it today.

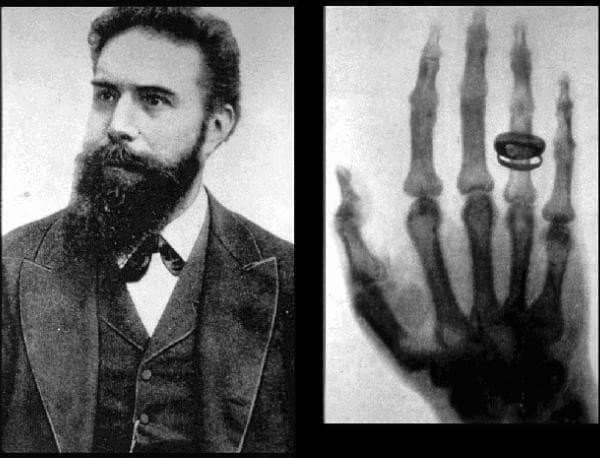

Wilhelm Conrad Roentgen, discoverer of x-rays


For example, x-rays were discovered in 1895 (by Wilhelm Conrad Roentgen, Professor at Wuerzburg University in Germany). So strictly speaking they are ‘old technology’.

But it would be absurd for hospitals to say: “because x-rays are based on old technology, we won’t be using them anymore”.

Here’s another example, closer to home.


Computers are based on a binary system, where all data is reduced to a ‘0’ or a ‘1’.

The binary system was developed by Gottfried Wilhelm Leibniz, who described it in a 1701 paper titled ‘Essay d’une nouvelle science des nombres’.

So you could argue that modern computers are based on an 18th-century invention.

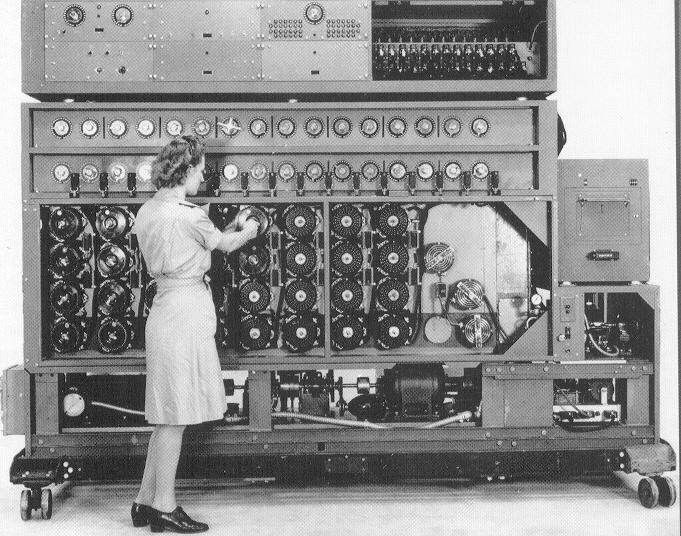

The Turing machine, forerunner of the modern computer


Some people argue for a more recent origin. They trace the modern computer to Alan Turing’s 1936 invention of the ‘universal machine’ (now called the Turing machine).

Either way, computers are based on ‘old technology’ (1701 or 1936 depending on your perspective).

So the fact that LSI was developed in the 1980s is neither here nor there – it doesn’t mean that LSI is no longer relevant or useful.

Google’s 2009 Patent Application

As I said, Google is very cagey about how its algorithms work.

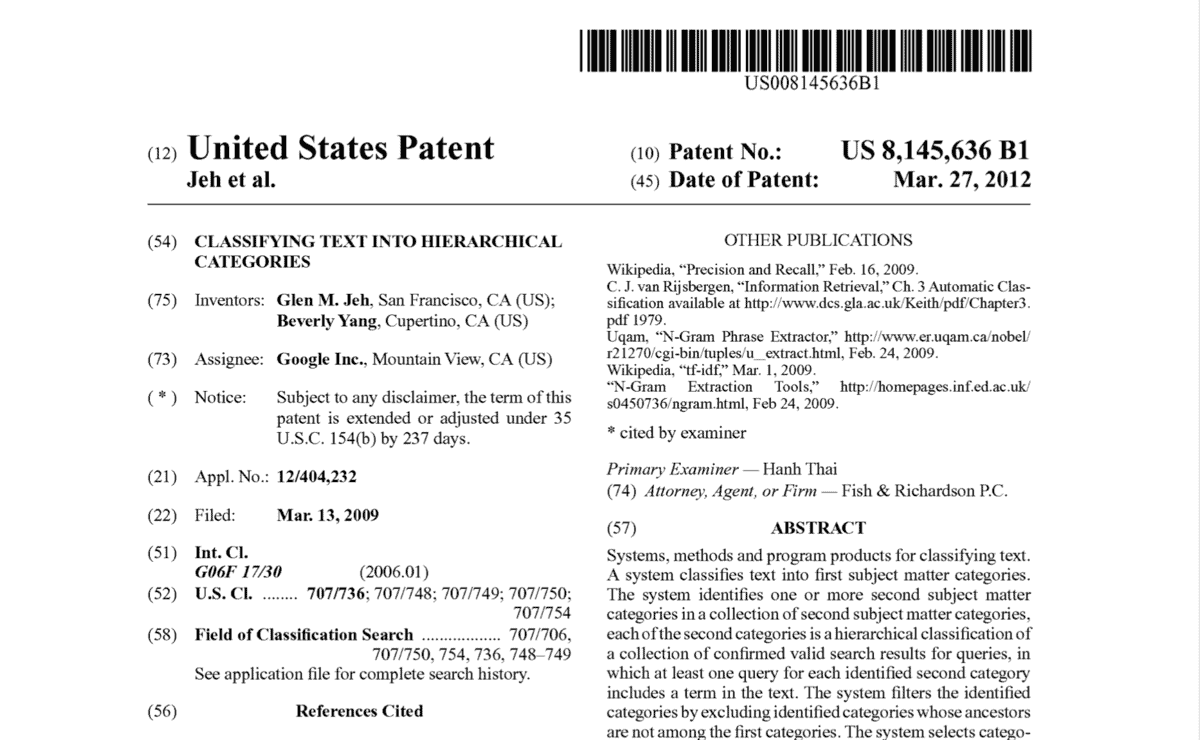

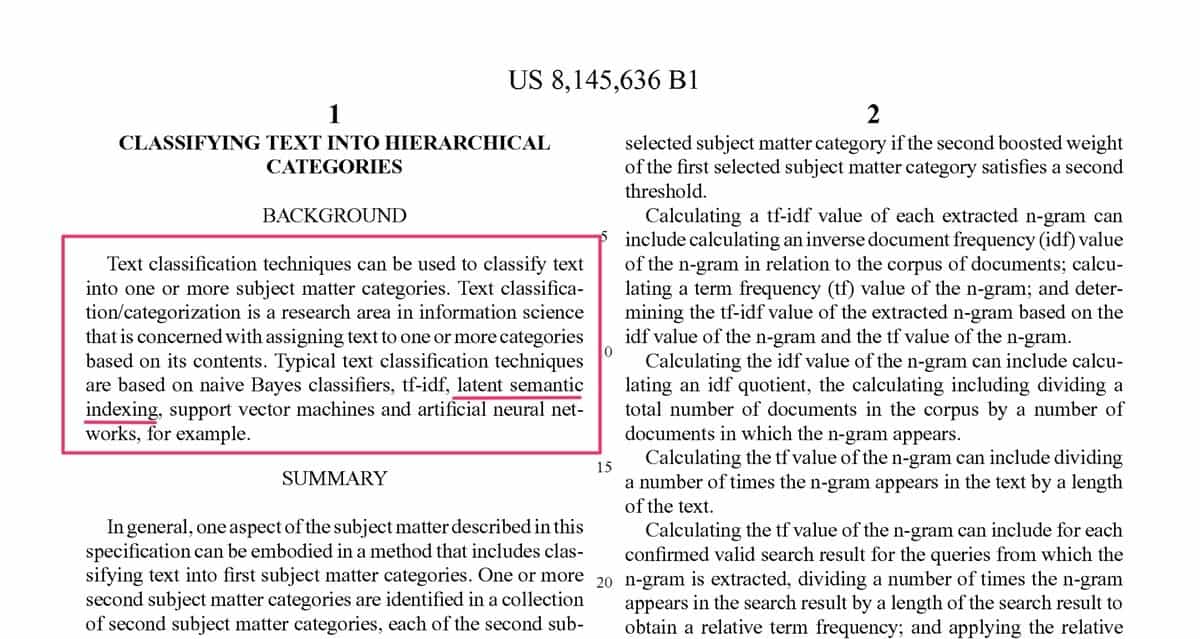

But in March 2009, Google applied for a patent in the US (US 8,145,636 B1). The patent application was titled “Classifying Text Into Hierarchical Categories”.

The application contains this paragraph:

“Text classification techniques can be used to classify text into one or more subject matter categories. Text classification/categorization is a research area in information science that is concerned with assigning text to one or more categories based on its contents. Typical text classification techniques are based on naive Bayes classifiers, tf-idf, latent semantic indexing, support vector machines and artificial neural networks, for example”.

So does Google use latent semantic indexing?

We don’t know for sure.

But it would be extraordinary if it didn’t (and it certainly wouldn’t be because LSI is ‘old technology’).

How Can LSI Help You Rank Better In Google?

There are various ways LSI can help you rank higher in Google. The most important is simply to realize that Google is focused on topics, not keywords.

As I mentioned above, techniques such as latent semantic indexing allow a search engine to map out entire topics and the sub-topics that make them up. That, in turn, means that the algorithm can measure how well a piece of content covers a particular topic.

To put it another way, Google can measure the topical authority of your piece of content.

Here are some ways to ensure that your content has high topical authority:

- Do some topic analysis. Look at the top five search results for your focus keyword and make a note of the topics and sub-topics that those web pages cover. Try to ensure that your content covers more of those topics and sub-topics than any other piece of content.

- Create topic clusters. Write a core article that covers a topic broadly, and then write ‘satellite’ articles that cover sub-topics in more detail. For example, you could write a core article about British fighter planes of the Second World War, and then a satellite article about Spitfires, another about Hurricanes, another about Gloster Gladiators, and so on. The satellite articles on the individual fighter planes will build out the topical authority of your core article.

- Use Google Auto Suggest. Start typing your focus keyword into Google and notice the long tail variations that Google comes up with. Those are all sub-topics that belong to your main topic. Try to include those sub-topics as headings in your article.

- Do the same with Google’s ‘People Also Ask’ (usually about one third of the way down the results page) and Google’s ‘Related Searches’ (at the foot of the results page). These are all related topics or sub-topics. Include them as headings followed by a few paragraphs, and you’ll boost the topical authority of your article.
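The topic analysis step above can be partly automated. The Python sketch below is a deliberately crude illustration: it assumes you have already collected a list of sub-topics by hand (from the top results, Auto Suggest, and so on) and checks which of them your draft mentions by simple substring matching. The function name `coverage_report` and the sample draft are hypothetical.

```python
def coverage_report(draft, subtopics):
    """Split `subtopics` into those mentioned in `draft` and those missing."""
    text = draft.lower()
    covered = [t for t in subtopics if t.lower() in text]
    missing = [t for t in subtopics if t.lower() not in text]
    return covered, missing


# Sub-topics gathered by hand for the core article in the example above.
subtopics = ["Spitfire", "Hurricane", "Gloster Gladiator"]
draft = "This article compares the Spitfire with the Hurricane."

covered, missing = coverage_report(draft, subtopics)
print(covered)  # ['Spitfire', 'Hurricane']
print(missing)  # ['Gloster Gladiator']
```

A check like this only tells you whether a phrase appears, not whether the sub-topic is covered well, so treat the `missing` list as a prompt for further writing rather than a score.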

Google: There Is No Such Thing As LSI Keywords

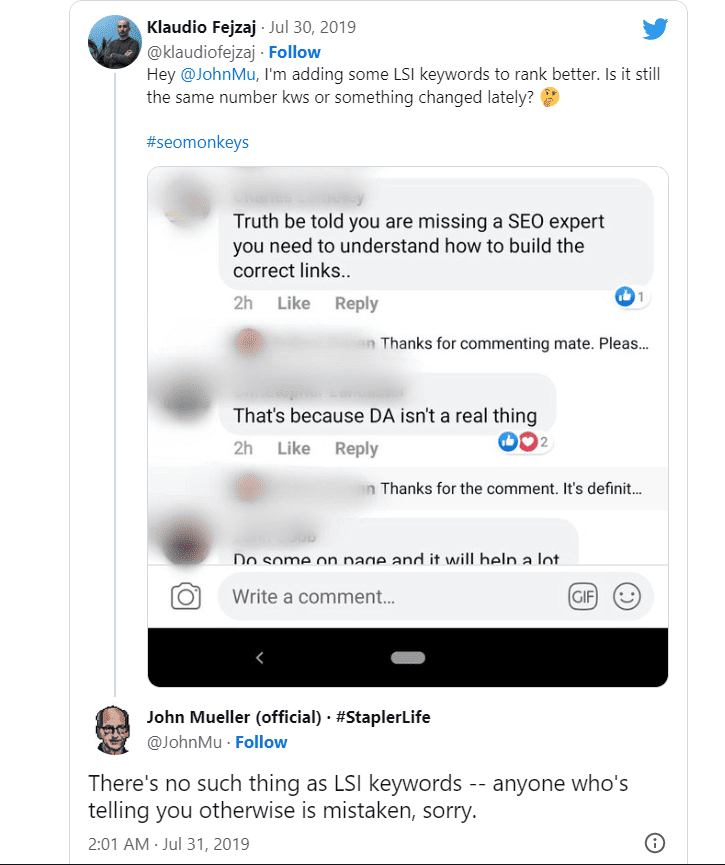

I can’t finish this article without addressing that tweet by John Mueller of July 2019.

Here it is:

What to make of this?

Well firstly, he didn’t say Google doesn’t use latent semantic indexing. And secondly, he may simply have been objecting to the term ‘LSI keywords’.

But is there a group of related words that cluster together in a predictable pattern for the topic you’re writing about? And does Google use those word clusters to identify topics?

I’m willing to bet on it!

Conclusion

Latent semantic indexing is a mathematical method for understanding the meaning of words by studying patterns in the way that words group together in text content.

While there’s no hard evidence that search engines use it, it seems more than likely that they do. Search engines such as Google probably use latent semantic indexing to understand context and to map out topics and sub-topics.

Topical authority is replacing keyword density as a ranking factor. An understanding of latent semantic indexing will help you build topical authority for your articles and your website and rank higher in the search results.

How do I identify the LSI keywords for my SEO strategy?

Hi Rohit,

Thanks for your question.

There are various online tools for finding LSI keywords, such as:

LSIGraph: LSI Keyword Generator (FREE)

https://lsigraph.com/

LSI Keywords Generator Tool – Using LSI For On-Page

https://lsikeywords.com/

Free LSI Keyword Generator Tool

https://www.keysearch.co/tools/lsi-keywords-generator

However, I prefer to find my LSI keywords on Google. Just type your main keyword into Google Search and then look at Google Auto Suggest, Google’s ‘People Also Ask’, and Google’s ‘Related Searches’.

These three methods will all produce words that are closely related to your main keyword.

The advantage of getting your LSI words from Google is that because they came from Google, you know that the Google algorithm recognizes these words as being LSI words for your main keyword.

I hope this answers your question.

Best regards,

Rob